Executive Snapshot: The Optus Breach in Plain Sight

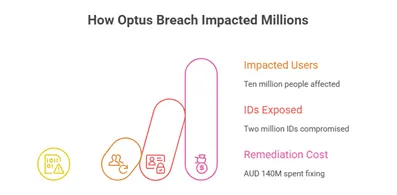

In September 2022, Australia woke up to the largest data breach in its history. Optus, the country’s second-largest telecom, disclosed that the personal information of nearly 10 million people had been exposed. To put that in perspective, that’s almost 40% of the entire population.

Among the data spilled were 2.1 million government-issued IDs – passports, driver’s licenses, Medicare cards – the kind of information that isn’t just sensitive, but life-defining. Within days, a ransom note for AUD 1.5 million in Monero appeared on a darknet forum. The attacker later deleted the post and even issued a strange “apology”, but by then the damage was done.

The fallout was swift and brutal:

- The Office of the Australian Information Commissioner (OAIC) launched an investigation.

- Lawsuits and regulatory heat mounted.

- Optus earmarked AUD 140 million for remediation.

- And eventually, the CEO stepped down.

This wasn’t just another corporate security failure. It became a global case study in API security.

Why it matters

The Optus breach shattered two dangerous myths in cybersecurity:

1. That you can only be hacked by highly sophisticated attackers.

2. That compliance checklists are enough protection.

Neither was true here. What happened was far more ordinary, and that’s what makes it so frightening.

Quick lessons upfront

- You cannot secure APIs you don’t know exist. Shadow APIs are a silent killer.

- Business logic flaws are deadlier than hackers. Weak authorization and enumeration errors did more damage than malware ever could.

- Regulators don’t care about excuses. Calling an incident “a sophisticated attack” doesn’t soften the blow. If anything, it makes you look less accountable.

And with that, let’s get into the first, and perhaps the most important lesson.

Lesson 1: You Cannot Defend What You Don’t Know

Every company knows about the APIs they build, maintain, and publish. But what about the ones they’ve forgotten? The test endpoints, staging leftovers, or APIs spun up years ago that never got decommissioned? These are shadow APIs, and they’re the silent cracks attackers look for.

The blind spot

In Optus’s case, an API endpoint had been left exposed on an unused subdomain. It wasn’t monitored. It wasn’t patched. Worst of all, it didn’t require authentication. That single oversight became the root entry point for a breach affecting millions.

This wasn’t an elite, nation-state hack. It was the digital equivalent of finding a back door left unlocked.

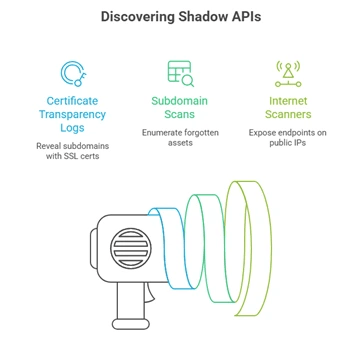

How attackers actually find these APIs

Here’s the uncomfortable truth: finding shadow APIs is often easier than we think.

- Certificate Transparency logs can reveal hidden subdomains where SSL certs were issued.

- Subdomain scans (using tools like subfinder) can enumerate forgotten assets in minutes.

- Shodan and other internet-wide scanners regularly expose endpoints sitting quietly on public IPs.

Attackers don’t need to break in. They just need to look in the places we forget to check.

Remedy: Shine a light before someone else does

Securing APIs isn’t about one-time audits. It’s about continuous discovery. That means:

- Runtime discovery: monitoring actual API traffic to see what’s live.

- Passive scanning: running network discovery tools to catch forgotten subdomains.

- Classification: once APIs are found, clearly mark them as internal vs. public and sensitive vs. non-sensitive.

And yes, this can be as simple as combining open-source tools and basic scripts:

# Runtime API endpoint monitoring (assumes a hypothetical inventory endpoint that returns JSON)
curl -s -X GET https://api.myorganization.com/endpoints | jq '.endpoints[] | {path, method, auth_required}'

# Subdomain discovery
subfinder -d myorganization.com -silent

# Shodan network scan (requires API key)
curl -X POST "https://api.shodan.io/shodan/scan?key=YOUR_API_KEY" -d 'ips=203.0.113.0/24'

None of these are “advanced attacks”. They’re just how the internet works. Which is why Lesson 1 is simple but absolute: if you don’t know every API in your environment, you cannot defend your business.
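To make the inventory gap concrete, here is a minimal sketch that compares the hostnames from a discovery run (for example, the output of subfinder) against the hosts your team officially tracks. The function name and the sample hostnames are hypothetical, and a real inventory would likely live in a CMDB or API gateway rather than a list.

```python
def find_shadow_hosts(discovered, inventory):
    """Return discovered hostnames that are missing from the official inventory."""
    known = {host.strip().lower() for host in inventory}
    found = {host.strip().lower() for host in discovered if host.strip()}
    return sorted(found - known)


# Hypothetical data: discovery output vs. the documented inventory.
discovered_hosts = [
    "api.myorganization.com",
    "staging-api.myorganization.com",
    "API.myorganization.com",
    "legacy.myorganization.com",
]
inventory_hosts = ["api.myorganization.com"]

shadow = find_shadow_hosts(discovered_hosts, inventory_hosts)
for host in shadow:
    print(f"Untracked host, review and classify: {host}")
```

Anything this reports is a candidate shadow API: either add it to the inventory with an owner and a sensitivity label, or decommission it.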


Lesson 2: Authentication Isn’t Optional

If Lesson 1 was about knowing your attack surface, Lesson 2 is about guarding the doors properly. Optus failed on the most fundamental security control of all: authentication.

The flaw in plain sight

At the center of the breach was an API endpoint that didn’t require a token, key, or login. Anyone on the internet could send an anonymous GET request and receive sensitive customer records in return. No username. No password. Just data flowing freely.

This wasn’t a zero-day exploit. It was the absence of a lock.

Technical unpack: how it was exploited

The exposed API suffered from Broken Object Level Authorization (BOLA), also known as Insecure Direct Object Reference (IDOR). In simple terms:

- The API URL may have looked like /users/{userId}.

- There were no server-side checks to confirm whether the requester was allowed to access that user’s data.

- Attackers discovered they could increment IDs (e.g., 5332 → 5333 → 5334) and retrieve records for every customer.

A basic Python loop was enough to exfiltrate massive amounts of data (Illustrative example):

import requests

# Illustrative only: the URL and ID range are hypothetical
for user_id in range(5000, 6000):
    r = requests.get(f"https://api.optus.com/users/{user_id}", timeout=10)
    if r.status_code == 200:
        print(r.json())

No brute force. No malware. Just enumeration.

Remedy: how to make this impossible

- Server-side authorization checks: Every API call must validate not just that the request is authenticated, but that the requester has permission to access the object in question.

- Strong authentication: API gateways should enforce OAuth2 or JWT tokens. No token, no entry.

- Rate limiting: Prevent attackers from running infinite loops by detecting unusual enumeration attempts and throttling requests.
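A server-side object-level check can be sketched in a few lines. This is an illustration under stated assumptions, not how Optus or any particular gateway implements it: the `Caller` type, the `is_support_agent` flag, and the in-memory `CUSTOMERS` store are all hypothetical, and token verification is assumed to have happened before this code runs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Caller:
    """Identity taken from an already-verified token (verification not shown)."""
    user_id: int
    is_support_agent: bool = False


# Hypothetical in-memory store standing in for a customer database.
CUSTOMERS = {
    5332: {"name": "Jane Doe"},
    5333: {"name": "John Roe"},
}


def get_customer(caller, customer_id):
    """Return a record only if the caller is allowed to see this object."""
    if caller is None:
        raise PermissionError("authentication required")
    if caller.user_id != customer_id and not caller.is_support_agent:
        raise PermissionError("not authorized for this record")
    return CUSTOMERS[customer_id]


jane = Caller(user_id=5332)
print(get_customer(jane, 5332))  # own record is returned
try:
    get_customer(jane, 5333)  # neighbouring ID is refused
except PermissionError as exc:
    print(f"Denied: {exc}")
```

The point of the design is that the decision depends on both the caller and the object being requested, so incrementing an ID no longer yields someone else's data.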

Optus’s oversight here wasn’t about an attacker being smarter. It was about a company being careless. Which leads us to the next painful lesson.


Lesson 3: “Sophisticated Attack” Is Often Just Bad Hygiene

When the breach was first announced, Optus described it as a “sophisticated attack”. That framing didn’t survive for long. Security researchers quickly debunked the claim, showing it was caused by a basic misconfiguration and a lack of fundamental controls.

The credibility gap

This mismatch between words and reality made things worse. Customers felt misled. Regulators smelled blood. And the media narrative shifted from “company under siege” to “company hiding negligence”.

The fallout? A CEO resignation. A provision of AUD 140 million for legal and remediation costs. And a permanent stain on Optus’s credibility.

The deeper lesson

It’s tempting for organizations to spin incidents as advanced nation-state-level hacks. But the truth almost always comes out – and when it does, the cover-up hurts more than the breach.

Transparency, even when painful, is the safer long-term strategy. Customers and regulators don’t expect perfection. They do expect honesty.

Remedy: preparing for the hard conversations

- Root-cause-based communications: Never speculate or blame “sophisticated hackers” until forensics confirm it.

- Tabletop exercises: Run breach disclosure drills not just for the technical response, but for the communications response. Practice explaining failures openly and consistently.

If Lesson 2 showed how a missing lock can bring a company down, Lesson 3 shows how a poor explanation can finish the job. And that brings us to the next frontier: how business logic flaws, not malware, are often the deadliest attack vector.


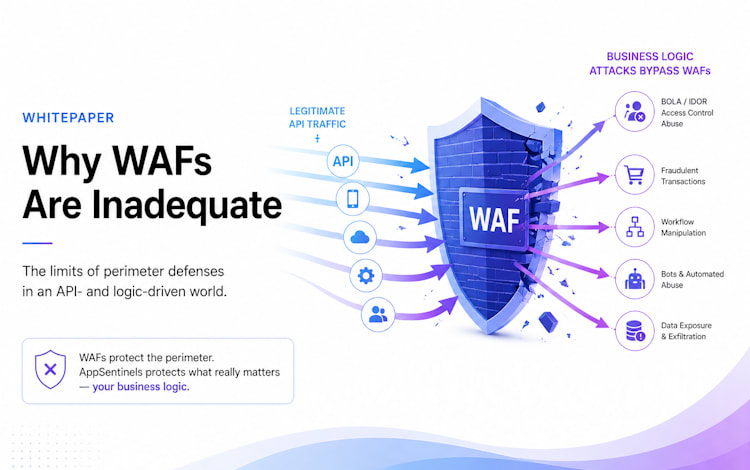

Lesson 4: Business Logic Flaws Beat Firewalls

If authentication is the lock, business logic is the rulebook, and Optus forgot to enforce it. The breach wasn’t about malware or exploits; it was about processes the API allowed.

The flaw in plain sight

Attackers didn’t need a zero-day. They abused predictable workflows and missing per-object checks to enumerate customer records:

- Incrementing IDs (contactid=5332 → 5333 → 5334) pulled data at scale.

- APIs blindly trusted the request parameters instead of enforcing per-object authorization.

Examples of business logic abuse:

- Mass assignment: APIs that accept a JSON object and update all fields without validation. Attackers can overwrite fields they shouldn’t touch.

- Workflow abuse: Multi-step processes that skip verification, payment, or approval checks, letting attackers bypass normal safeguards.

Technical pseudocode:

import requests

# Illustrative only: the URL, parameter name, and ID range are hypothetical
for user_id in range(5000, 6000):
    r = requests.get(f"https://api.optus.com/customer?contactid={user_id}", timeout=10)
    if r.status_code == 200:
        print(r.json())

Without per-object authorization, this simple loop exposes data for thousands of users instantly.

Remedy: stop logic from being a vulnerability

- Negative test cases in QA: Simulate abuse scenarios to ensure APIs reject unauthorized actions.

- API security testing: Fuzzing and automated Postman/Newman scripts help uncover flaws before production.

- Process reviews: Map workflows end-to-end and enforce checks at every step. Don’t trust client input.
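One way to turn "don't trust client input" into code is an explicit allowlist for updates, paired with a negative test that proves a disallowed field is rejected. The sketch below assumes a hypothetical profile object and a hypothetical set of editable fields; it shows the pattern, not a specific framework's API.

```python
# Hypothetical allowlist: the only fields a customer may change on their own profile.
EDITABLE_FIELDS = {"email", "address"}


def apply_profile_update(profile, payload):
    """Return an updated copy of profile, rejecting any field outside the allowlist."""
    unexpected = set(payload) - EDITABLE_FIELDS
    if unexpected:
        raise ValueError(f"fields not allowed: {sorted(unexpected)}")
    updated = dict(profile)
    updated.update(payload)
    return updated


profile = {
    "email": "jane.doe@example.com",
    "address": "123 Main St, Sydney",
    "role": "customer",
}

# Legitimate update passes.
print(apply_profile_update(profile, {"email": "jane@example.com"}))

# Negative test case: an attempt to overwrite a privileged field is rejected.
try:
    apply_profile_update(profile, {"role": "admin"})
except ValueError as exc:
    print(f"Rejected: {exc}")
```

Rejecting the whole request, rather than silently dropping the extra field, makes abuse attempts visible in logs and test reports.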

Business logic flaws don’t care about firewalls. They live in the trust your code gives away.

Lesson 5: Excessive Data Exposure Multiplies Impact

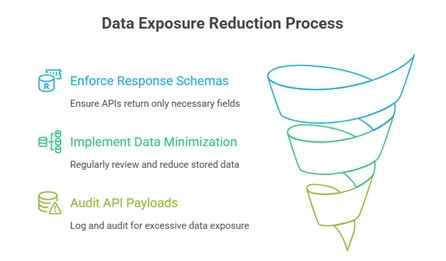

Even if authentication and logic were perfect, Optus made another critical mistake: too much data was returned per request.

The flaw in plain sight

APIs should follow least privilege, returning only what’s necessary. Instead, the Optus endpoint returned full PII objects, including:

- Driver’s license numbers

- Passport numbers

- Date of birth

- Addresses

This multiplied the impact. Millions of users’ sensitive identity documents were suddenly in the attacker’s hands.

Example JSON snippet:

Full (bad):

{
  "name": "Jane Doe",
  "email": "jane.doe@example.com",
  "address": "123 Main St, Sydney",
  "dob": "1990-01-01",
  "driver_license": "NSW1234567",
  "passport": "N1234567"
}

Redacted (good):

{
  "name": "Jane Doe",
  "email": "jane.doe@example.com"
}

Remedy: trim what you expose

- Response schema enforcement: Ensure APIs return only fields needed for the operation.

- Data minimization policies: Regularly review stored data and reduce what’s exposed.

- Real-world check: Log your largest API payloads and audit for excessive data exposure.
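Response schema enforcement can be as small as a per-operation field allowlist applied just before serialization. The following is a minimal sketch using the field names from the JSON example above; the operation name `profile_summary` and the `RESPONSE_FIELDS` mapping are hypothetical.

```python
# Hypothetical per-operation response schemas.
RESPONSE_FIELDS = {
    "profile_summary": ("name", "email"),
}


def shape_response(record, operation):
    """Return only the fields the named operation is allowed to expose."""
    allowed = RESPONSE_FIELDS[operation]
    return {field: record[field] for field in allowed if field in record}


full_record = {
    "name": "Jane Doe",
    "email": "jane.doe@example.com",
    "address": "123 Main St, Sydney",
    "dob": "1990-01-01",
    "driver_license": "NSW1234567",
    "passport": "N1234567",
}

print(shape_response(full_record, "profile_summary"))
```

Because the allowlist names what may leave the service, a new sensitive column added to the database stays hidden until someone deliberately exposes it.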

Exposing everything turns a small leak into a catastrophe. The principle is simple: give only what’s needed, nothing more.

Lesson 6: Monitoring Gaps Let Attackers Roam Free

One of the starkest failures in the Optus breach was detection. Nothing flagged the mass exfiltration while it was running through the company’s own API; by the time suspicious activity was noticed, the data was already gone. Within days, the attacker was advertising it on a breach forum.

Missed signals:

- Sequential API requests quietly harvesting customer IDs.

- Unusual spikes in GET request volumes fetching sensitive data.

Detection recipes (engineer-friendly):

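- ELK – flag clients requesting unusually many distinct IDs (assumes log fields named client_ip and userId; alert when distinct_ids is far above a normal client’s count):

{
  "size": 0,
  "query": { "range": { "@timestamp": { "gte": "now-1d/d" } } },
  "aggs": {
    "by_client": {
      "terms": { "field": "client_ip", "size": 50 },
      "aggs": { "distinct_ids": { "cardinality": { "field": "userId" } } }
    }
  }
}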

- Splunk SPL – monitor abnormal data sizes per user:

index=api_logs | stats sum(bytes) as totalBytes by user | where totalBytes > <threshold>

Remedy:

- Continuous API observability – logs, distributed tracing, and real-time metrics.

- Deploy honeytokens – seed fake IDs to trigger alerts if accessed.

- Set up anomaly detection to catch unusual access patterns or data exfiltration.
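The sequential-ID signal and the honeytoken idea can both be prototyped offline against parsed logs before wiring them into a SIEM. This is a rough sketch, assuming each log line has already been reduced to a `(client, requested_id)` pair; the function names, the `min_run` threshold, and the sample data are hypothetical.

```python
from collections import defaultdict


def longest_consecutive_run(ids):
    """Length of the longest run of consecutive integers in ids (order-insensitive)."""
    unique = set(ids)
    best = 0
    for value in unique:
        if value - 1 in unique:
            continue  # not the start of a run
        length = 1
        while value + length in unique:
            length += 1
        best = max(best, length)
    return best


def flag_enumeration(events, min_run=20, honeytokens=frozenset()):
    """Return clients that walked a long ID run or touched a honeytoken ID.

    events is an iterable of (client, requested_id) pairs parsed from API logs.
    """
    by_client = defaultdict(list)
    for client, requested_id in events:
        by_client[client].append(requested_id)
    flagged = {}
    for client, ids in by_client.items():
        reasons = []
        if longest_consecutive_run(ids) >= min_run:
            reasons.append("sequential_ids")
        if honeytokens.intersection(ids):
            reasons.append("honeytoken")
        if reasons:
            flagged[client] = reasons
    return flagged


# Hypothetical log extract: one client walks IDs 5000-5049, another makes two lookups.
events = [("203.0.113.7", i) for i in range(5000, 5050)]
events += [("198.51.100.4", 5332), ("198.51.100.4", 9001)]
print(flag_enumeration(events, min_run=20, honeytokens=frozenset({5025})))
```

A honeytoken hit is the stronger signal of the two, since no legitimate client has a reason to request a seeded fake ID.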

If you wish to learn more about this, read this resource: ‘Detect API Abuse’.

Bottom line: If you can’t see what’s happening on your APIs, attackers can roam free without leaving a trace. Monitoring isn’t optional. It’s your last line of defense.

Lesson 7: Regulators and Class Actions Hit Hard

Once a breach goes public, the fallout is swift and expensive. Optus faced dual pressure: regulatory investigations and class-action lawsuits.

Regulatory investigations:

- OAIC and ACMA launched probes into Optus’s practices and breach response.

Class actions:

- Multiple lawsuits representing over 100,000 affected customers sought damages and remediation.

Financial & reputational impact:

- Optus allocated A$140 million for breach-related costs.

- Brand trust collapsed.

- The CEO resigned in November 2023.

Lesson: The cost of a breach far exceeds the cost of prevention. Skimping on API security and monitoring isn’t just a technical risk. It’s a business risk.

Remedy:

- Treat all APIs handling PII as regulated data stores.

- Adopt compliance-by-design. Embed security, privacy, and regulatory standards throughout development.

- Maintain audit trails and documentation to demonstrate adherence to frameworks like NIST 800-53, GDPR, and OAIC guidelines.

Insight: Prevention isn’t optional. Every unsecured API is a potential trigger for regulatory action and litigation.

Lesson 8: Incident Response Can Save or Sink You

One of the most critical takeaways from the Optus breach is how timing and clarity of response can make or break an organization.

Optus’s disclosure: Suspicious activity was detected internally around September 20–21, 2022, but public disclosure didn’t happen until September 22. Early communications were inconsistent, contradictory, and lacking clarity – eroding trust almost instantly.

Lesson: The first 72 hours are crucial. Immediate, transparent, and coordinated responses limit reputational damage, regulatory scrutiny, and customer backlash.

Template incident response checklist:

- DFIR engagement: Activate Digital Forensics & Incident Response teams to map the breach, determine affected systems, and trace attacker methods.

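- Regulator notification: Meet legal notification deadlines (Australia’s Notifiable Data Breaches scheme requires notifying the OAIC as soon as practicable once an eligible breach is identified; the 72-hour deadline comes from GDPR).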

- Customer communications: Draft clear, concise, and accurate messages explaining what happened and next steps.

Real-world contrast: Companies like Microsoft or Salesforce that disclosed breaches quickly and transparently maintained customer trust and navigated regulators more effectively than those with delayed or evasive responses.

Takeaway: Slow or muddled response amplifies damage. Speed, transparency, and coordination are your defensive weapons.

Lesson 9: Prevention Requires Phased Remediation

Prevention isn’t a single action. It’s a phased process. Optus’s aftermath highlights what any organization should do immediately and over time.

Recommended phases:

- Day 0–7: Emergency API inventory, enforce gateway rules, block risky endpoints.

curl -s -X GET https://api.example.com/endpoints | jq '.endpoints[] | {path, method}'

- 30 days: Roll out OAuth2/JWT for authentication, enforce rate limits, run object-level authorization tests.

rate_limit:
  requests_per_minute: 60

- 90 days: Integrate CI/CD API security scans, schema validation, and automated tests.

semgrep --config=auto path/to/api/code/

- 6–12 months: Adopt Zero Trust API design, runtime protection, anomaly detection, and micro-segmentation.

- Optional: Fuzz endpoints with tools like OWASP ZAP during testing cycles.
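To show what the `requests_per_minute: 60` setting in the 30-day phase means in practice, here is a minimal per-client sliding-window limiter. It is a sketch under assumptions, not a gateway's actual implementation: the class name is hypothetical, state is held in memory for a single process, and a production setup would normally rely on the gateway or a shared store.

```python
import time
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most `limit` requests per client within `window_seconds`."""

    def __init__(self, limit=60, window_seconds=60.0, clock=time.monotonic):
        self.limit = limit
        self.window_seconds = window_seconds
        self.clock = clock  # injectable so tests can control time
        self._hits = defaultdict(deque)

    def allow(self, client_id):
        now = self.clock()
        hits = self._hits[client_id]
        # Drop timestamps that have aged out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.limit:
            return False
        hits.append(now)
        return True


limiter = SlidingWindowLimiter(limit=60, window_seconds=60.0)
results = [limiter.allow("203.0.113.7") for _ in range(61)]
print(results.count(True), results.count(False))
```

An enumeration loop like the ones shown earlier would hit the limit within the first minute, which both slows the attacker and gives monitoring a clear event to alert on.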

Insight: Treat prevention as a continuous, phased journey rather than a one-off project. Early containment plus structured long-term remediation drastically reduces future risk.

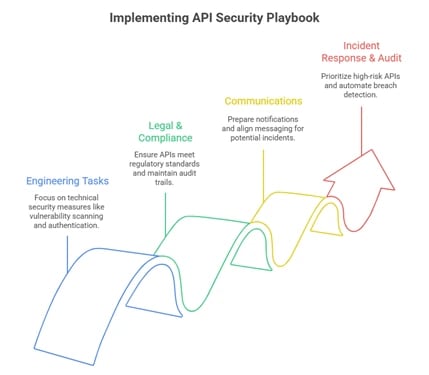

Lesson 10: The CISO Playbook Must Be API-First

If there’s one lesson from the Optus breach, it’s this: CISOs can no longer treat APIs as “just another IT system”. APIs are the lifeblood of modern digital services. They connect partners, apps, and internal systems, often carrying the most sensitive customer data. When neglected, even a tiny oversight – like a forgotten endpoint or a missing authorization check – can snowball into a 10-million-person breach.

After walking through lessons on shadow APIs, broken object-level authorization, excessive data exposure, and monitoring gaps, the picture is clear: CISOs need a proactive, API-first playbook. Waiting until a breach happens is too late.

Here’s what a strong, practical API-first approach looks like:

- Know your APIs, inside and out: Conduct continuous discovery, map endpoints to their data sensitivity, and keep an up-to-date inventory. No shadow API should be left lurking.

- Enforce strong access controls: Every API call must check who’s requesting what. Implement OAuth2 or JWT authentication, per-object authorization, and rate limits to block automated enumeration.

- Test like an attacker: Incorporate negative test cases, fuzzing, and CI/CD security scans to catch business logic flaws before they reach production.

- Plan for breach scenarios: Pre-draft regulator and customer communications, engage your DFIR team immediately if anything suspicious pops up, and have tabletop exercises to rehearse disclosure messaging.

- Monitor everything: Logs, traces, and real-time metrics are your early warning system. Honeytokens and anomaly detection help you catch unusual patterns before attackers self-announce on the dark web.

Think of it as a 15–20 step checklist spanning engineering, legal, and communications tasks. Copy-paste execution is useful, but what separates proactive CISOs from reactive ones is understanding the “why” behind each step: why a single missing authorization check or a stale endpoint can bring down the whole company.


How AppSentinels Helps Organizations Avoid Optus-Style Breaches

Modern API ecosystems are sprawling, dynamic, and prone to human error. That’s where AppSentinels turns API security from a blind spot into a board-level strength.

Here’s how it works:

- Continuous API Discovery: Maps every endpoint – active, staging, legacy, shadow, orphan, unused, and LLM APIs – with automated classification and real-time risk scoring so nothing goes unnoticed.

- Granular Authorization Controls: Enforces object-level checks to prevent BOLA/IDOR vulnerabilities, with continuous automated pen-testing of workflows and business logic by autonomous agents working 24×7.

- Real-Time Monitoring & Threat Detection: AI-driven defense detects enumeration, abnormal API use, bot attacks, fraud attempts, and data exfiltration 24/7, with MITRE-aligned threat analytics.

- Data Exposure Controls: Automated sensitive/PII exposure detection ensures APIs return only the minimum necessary data – no full passports, no unnecessary PII.

- Compliance-Ready Reports: Generates audit-ready outputs for OAIC, GDPR, PCI DSS, HIPAA, and more, with governance and misconfiguration insights that make regulator communication straightforward.

With AppSentinels, API security isn’t just about preventing breaches. It’s about building confidence with your board, regulators, and customers – now with critical safeguards for AI-era risks like rogue agents and logic exploitation. Lessons from Optus show what gaps can cost; AppSentinels is the playbook that ensures those gaps never exist.

Frequently Asked Questions about the Optus Data Breach

Q1. Who was behind the Optus data breach?

The attacker was never formally identified. Evidence suggests it was an opportunistic actor exploiting a publicly exposed API, not a sophisticated nation-state or advanced cybercrime group.

Q2. How was the breach discovered?

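Optus did not catch the exfiltration while it was underway. Suspicious activity was noticed around September 20–21, 2022, and the company disclosed the incident on September 22. The scale became clear shortly afterwards, when the perpetrator posted samples of stolen customer data on a breach forum and demanded a ransom.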

Q3. What types of ID documents were exposed?

Highly sensitive data was compromised: passports, driver’s licences, Medicare numbers, and VicRoads-issued IDs. Around 2.1 million customers had at least one government-issued document exposed.

Q4. What should affected customers do?

- Check your status with Optus’ official breach tool.

- Register with IDCARE, the government-backed identity support service.

- Replace compromised passports, licences, or Medicare cards.

- Monitor financial accounts for suspicious activity.

Q5. What was the Australian government’s response?

Emergency legislation allowed fast-tracked replacement of identity documents and increased data-sharing with banks to protect customers. Regulators like OAIC and ACMA launched investigations.

Q6. What legal actions followed?

Multiple class actions were filed by firms such as Slater & Gordon and Maurice Blackburn, representing millions of affected customers. Cases are ongoing, with potential compensation still under review.

Q7. How much did the breach cost Optus?

Optus reserved around A$140 million for customer support, ID replacement, and legal provisions. Long-term costs, including fines, reputational damage, and regulatory scrutiny, could be much higher.

Q8. Did Optus face fines or penalties?

OAIC has commenced civil penalty proceedings for serious and repeated privacy violations. Potential fines could reach hundreds of millions if the court rules against Optus.

Q9. How does this breach compare to others, like Equifax?

Both are stark reminders that basic security lapses – misconfigured APIs in Optus, unpatched software in Equifax – can trigger massive fallout. Scale and sensitivity make these incidents defining case studies.

Q10. What are the broader impacts?

Customer trust eroded, regulatory scrutiny intensified, privacy law debates escalated, and identity document replacement costs rose sharply.

Q11. What ethical issues arose?

Optus’ initial claim of a “sophisticated attack” was disproven, highlighting risks of spin, transparency failure, and corporate responsibility lapses.

By now, the lessons are clear. The Optus breach wasn’t caused by a shadowy hacker with superpowers. It was the result of overlooked APIs, missing authentication, business logic flaws, excessive data exposure, and slow responses. Each misstep multiplied risk, drawing regulatory scrutiny, class actions, and reputational damage.

This isn’t just a story about Optus; it’s a warning shot for every organization handling sensitive data. Every API, every endpoint, every piece of personal information is a potential target. How you monitor, secure, and respond can mean the difference between a contained incident and a headline-making disaster. With the right mindset, tools, and proactive measures, you can prevent history from repeating itself.

Conclusion: The Optus Breach Is Everyone’s Warning Shot

The Optus breach isn’t just a cautionary tale. It’s a wake-up call. Hidden APIs quietly exposed millions of customers’ sensitive data. Missing authentication checks and business logic flaws made it shockingly easy for attackers to collect full ID documents. And because monitoring wasn’t up to scratch, the exfiltration wasn’t caught while it was happening.

Slow, inconsistent communication only made things worse. Regulators moved in, class actions followed, costs soared, and trust took a massive hit.

So what does this teach us? You can’t protect what you don’t know exists. Authentication isn’t optional. Business logic flaws can be deadlier than the hackers themselves. Data exposure multiplies risk. Monitoring, transparency, and rapid incident response aren’t just nice-to-haves. They’re survival skills.

And prevention? It’s a phased, deliberate process that must start at the very top: API security is a board-level strategy, not a side project. Treat it with the same urgency you would a financial audit or compliance requirement.

Because the next breach is already waiting, and the only question is: are you ready?